To increase the capabilities of collaborative robots, or cobots, and broaden their range of applications, robotics companies are leveraging advances in both vision and motion control systems. One of the most important recent developments in vision technology has been 3D vision.

“3D vision systems are becoming very easy to integrate into robot applications and provide much better information for a robotic application than a 2D vision system ever could,” said David Bruce, manager of intelligent products & vision at FANUC America.

3D scanning gives cobots better perception

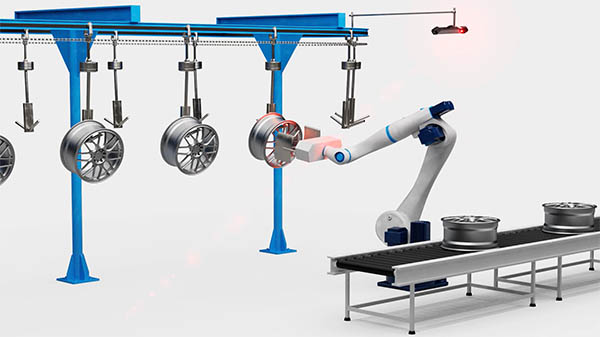

One advancement in vision systems that enhances cobot capabilities is 3D scanning in motion.

“Until recently, the majority of applications using collaborative robots and 3D vision have been limited to static scenes,” said Frantisek Takac, a strategic partners manager at Photoneo. “To recognize an object, navigate to it, inspect it, and pick it, a robot must get the object’s accurate 3D reconstruction, with exact X, Y, and Z coordinates that define the object’s position.”

“The new technology of parallel structured light is based on a unique sensor architecture that acquires the image in one snapshot, as opposed to the sequential scanning of a standard image sensor. This means that the parallel structured light method practically freezes the 3D scene in time,” Takac added.

That enables the robots to see, he said, and gives them the capability to “manipulate objects in 3D space or evaluate their quality during manufacturing.” That’s a “big game changer,” he added, because this can happen quickly and accurately.
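
To make the idea of X, Y, and Z coordinates concrete, here is a minimal sketch of how a single depth pixel can be turned into a 3D point. It assumes a simple pinhole camera model, which the article does not specify, and the `Intrinsics` values and the `pixel_to_xyz` helper are hypothetical names for illustration, not part of any vendor's software.

```python
from typing import NamedTuple, Tuple


class Intrinsics(NamedTuple):
    """Pinhole camera parameters in pixels (illustrative values only)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point, x
    cy: float  # principal point, y


def pixel_to_xyz(u: float, v: float, depth_m: float,
                 k: Intrinsics) -> Tuple[float, float, float]:
    """Back-project pixel (u, v) with a known depth into camera-frame X, Y, Z in meters."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


if __name__ == "__main__":
    camera = Intrinsics(fx=600.0, fy=600.0, cx=320.0, cy=240.0)  # assumed calibration
    print(pixel_to_xyz(920.0, 240.0, 2.0, camera))
```

A robot would still need a calibrated transform from the camera frame to its own base frame before it could move to the point.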

Another development that helps to enhance vision precision is the inclusion of artificial intelligence (AI) tools in smart cameras.

“Recent innovations in smart cameras enable AI detection filters for part defects and inspection for glue beads, grease, and welds applied by the cobot itself,” said Adrian Choy, product manager of robotics at Omron Automation America. “These features are often coupled with intuitive software wizards that accelerate the development of vision solutions.”

Motion control ranges from motors to hand guidance

Advances in several areas of motion control are also helping cobots to take on more difficult and sophisticated applications.

With demand increasing for greater and more efficient cobot mobility, motor manufacturers are challenged to deliver higher torque density in motors that pair well with highly efficient gearboxes, such as harmonic gearing.

“The improvements in motor designs have achieved higher torque density with advancements in the materials used and the manufacturing process in winding the motors,” said Yoshi Umeno, global market manager for robotics at Kollmorgen.

“For instance, by optimizing the amount of motor materials, creating the power for the motor, and reducing the excess heat from the motor, Kollmorgen’s TBM2G motor can deliver the performance to meet the market needs.”

Motion control technology has also made significant strides in safety, allowing motion control devices to be integrated with cobots without adding unnecessary levels of risk.

“Omron’s 1SA series servos can operate at configurable, safe, and limited speed and positions in instances where personnel need to approach a cobot workcell,” said Choy. “For example, a cobot mounted on a vertical seventh-axis lift, driven by a servo, can be programmed to move slower or maintain safe heights to avoid striking humans when they approach the cobot.”
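
The behavior Choy describes can be sketched as a simple clamp on the lift command. This is an illustration only: the `LiftCommand` type, the `limit_for_personnel` function, and the speed and height values are all hypothetical, and it does not represent Omron's API. In a real workcell, limits like these would be enforced by safety-rated drive functions rather than application code.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class LiftCommand:
    height_m: float   # requested carriage height
    speed_m_s: float  # requested travel speed


def limit_for_personnel(cmd: LiftCommand, person_nearby: bool,
                        safe_speed_m_s: float = 0.25,
                        safe_band_m: Tuple[float, float] = (1.0, 2.0)) -> LiftCommand:
    """Return the command unchanged when nobody is near; otherwise cap the
    speed and clamp the height into an assumed safe band."""
    if not person_nearby:
        return cmd
    low, high = safe_band_m
    return LiftCommand(
        height_m=min(max(cmd.height_m, low), high),
        speed_m_s=min(cmd.speed_m_s, safe_speed_m_s),
    )


if __name__ == "__main__":
    requested = LiftCommand(height_m=0.2, speed_m_s=1.0)
    print(limit_for_personnel(requested, person_nearby=True))
```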

Motion control has also seen intriguing developments in the programming and guidance of cobot movements. One groundbreaking advancement is real-time reconstruction of a cobot’s immediate environment.

“Real-time reconstruction enables the cobot to react to situations in real-time without slowing down its operation, eliminating the risk of collision with its changing environment,” said Takac. “This is especially important in applications where multiple cobots share the same workspace or where a cobot shares its workspace with a human worker.”

Another advance in this area uses manual intervention to automate cobot movements.

“Hand Guidance is the ability of the operator to control cobot motion by guiding or leading the robot through the desired motions,” said Greg Buell, product manager for collaborative robots at FANUC America. “This is both useful in teaching applications, where the path points or paths are recorded as the user guides the robot, and also in heavy lifting applications, where the process requires manual placement or movement of a large part with the smooth and gentle motion of a robotic arm.”

A technique similar to hand guidance involves the programming of high-end controllers.

“Advanced controllers can now be programmed by teaching the environment first rather than teaching the mechanics of the pick-and-place,” said Mike DeGrace, UR+ ecosystem manager at Universal Robots. “The motion controller and software then determine the path of the robot autonomously.”

Cobots clean up heavy equipment production

A good example of how advanced motion control can open the door to a new automation application is one manufacturer's use of a cobot for plasma cutting.

Carriere Industrial Supply (CIS), a manufacturer of heavy equipment for harsh mining environments, deployed Universal Robots’ UR10e industrial cobot to make the plasma-cutting process used to produce large metal parts more efficient. Specifically, the goal of the initiative was to streamline the cutting process by reducing the extensive waste cleanup.

“Using a robotic arm, we knew that we would get a more precise cut and possibly eliminate all of the grinding and cleanup of the joints,” said Pierre Levesque, manager of innovation and technologies at Carriere Industrial Supply.

Mason Fraser, junior software engineer at Carriere Industrial Supply, and his team leveraged the Java computer-vision library BoofCV, the jMonkeyEngine 3D game-engine library, and a webcam to project and line up the metal part in space, allowing the operator to know exactly where to cut every time.

Once the team started cutting, they realized that none of the parts were formed perfectly off the brake press. To correct this flaw, they developed a way to maintain a consistent cutting height above the plate, using the UR10e built-in force/torque controller and the drag nozzle of the plasma-cutting torch to drag along the surface of the plate to maintain a consistent distance while cutting.

Unfortunately, the pressure generated by the torch caused the nozzle to bounce off the plate. To solve the issue, the team wrote a custom proportional–integral–derivative (PID) controller in URScript and used voltage feedback to compensate for the torch height. This allowed the robot to keep a steady distance off the plate for every cut.
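
The article does not publish the team's controller, so the following is only a generic sketch of the technique, written in Python rather than the robot's own scripting language. It assumes a toy plant in which arc voltage rises linearly with torch height. The gains, the `arc_voltage` model, and the 115 V target are made-up values for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    """Textbook discrete PID controller (gains are illustrative, not CIS's values)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        self.integral += error * dt
        # No derivative term on the first sample: there is no previous error yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def arc_voltage(height_mm: float) -> float:
    """Hypothetical linear plant: arc voltage (V) rises with torch height (mm)."""
    return 100.0 + 10.0 * height_mm


def simulate_torch_height(start_mm: float = 3.0, target_volts: float = 115.0,
                          steps: int = 1000, dt: float = 0.01) -> float:
    """Run the loop and return the final torch height in mm."""
    pid = PID(kp=2.0, ki=0.5, kd=0.01)
    height = start_mm
    for _ in range(steps):
        # Controller output is treated as a vertical velocity command in mm/s.
        velocity = pid.update(target_volts, arc_voltage(height), dt)
        height += velocity * dt
    return height


if __name__ == "__main__":
    print(f"final height: {simulate_torch_height():.3f} mm")
```

In this toy model a 115 V target corresponds to a 1.5 mm standoff, so a measured voltage above target produces a negative (downward) command and the simulated torch settles near that height.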

CIS realized significant time and cost savings using the cobot and motion control system. Previously, 80 percent of the plasma-cutting time was spent cleaning up the waste from the manual cuts.

For a single large truck-body contract spanning the subsequent three years, Levesque determined that the manual trimming process alone would take more than 50 hours per truck.

Moving to a cobot application reduced that time to 12 hours per truck, ultimately saving 1,000 hours and yielding significant cost savings on the project.
