In August, Intel Corp. said it was “winding down” production of its RealSense cameras and sensors, which were used in robots and other systems. Just as Amazon.com Inc.'s acquisition of Kiva Systems took Kiva's mobile robots off the market but created opportunities for other companies, the absence of Intel's RealSense could help computer vision startups. In May, Orbbec said it was collaborating with Microsoft Corp. to develop new time-of-flight imaging products.

Lidar, radar, and other sensors are a large part of the expense for robots, drones, and autonomous vehicles. There has also been some market consolidation, as seen in Ouster's acquisition of Sense Photonics.

David Chen, co-founder and head of engineering at Orbbec, told Robotics 24/7 how the Troy, Mich.-based company hopes its technology will fill the void left by Intel RealSense.

How compatible is Orbbec's technology with existing products using Intel's RealSense? Is some customization necessary?

Chen: RealSense cameras, powered by stereovision technology based on semi-global matching [SGM], have been a popular choice, with good accuracy and reasonable computation time. They are a good and reliable choice for certain scenarios.

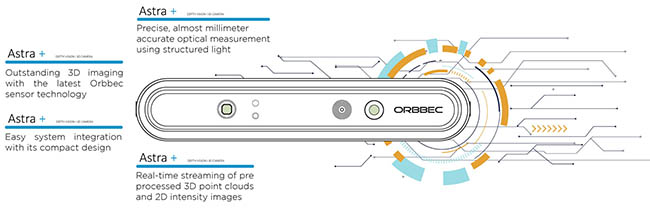

However, Orbbec has cameras based on SGM, structured light, time-of-flight [ToF], and deep learning, allowing them to be used in many more applications than RealSense.

Depending on an original equipment manufacturer's [OEM's] requirements and use cases, most businesses can easily switch from RealSense to Orbbec's 3D vision systems. RealSense and Orbbec cameras have similar scanning ranges and fields of view.

Beyond that, Orbbec is upgrading its cameras and plans to announce new products to help former RealSense customers switch seamlessly to Orbbec systems.

For robotics developers, how do the cost and capabilities compare with those of past products?

Chen: Compared with conventional 3D vision sensing systems, which have combined image sensors, ultrasonic sensors, and other devices, and assuming we want to achieve the same set of specs, RGB-D cameras always cost less.

What sets Orbbec apart is that we have our own manufacturing line along with proprietary chips. Whereas some manufacturers buy parts from suppliers and mass-assemble their cameras, Orbbec has researched and developed its own chips to create the best possible depth images for developers and businesses. This results in a superior product and lowers costs on large-volume orders.

What are the advantages of embedded computing compared with the use of Microsoft Azure Kinect?

Chen: Azure Kinect carries an additional cost in the form of a PC, which is needed to read the 3D data from the embedded sensor. This limits the camera's potential applications, because the product must be designed around Kinect's restrictions.

Embedded computing improves the quality of Kinect users' end solutions by taking the camera's range of applications to another level. Orbbec's ToF cameras can output high-quality depth data without a PC or other external computing, giving users significantly greater design and application flexibility.

How closely does Orbbec work with developers on customization, or do they have multiple options to choose from?

Chen: Orbbec has different kinds of products for customers to choose from, for example, long- and short-range 3D cameras, large and small cameras, and cameras with enclosures versus bare modules. We even have products with different IP [ingress protection] ratings for harsh industrial environments.

Orbbec can also provide customized modules depending on customer needs. We are currently supporting customers in mechanical engineering, software engineering, and algorithm optimization, as well as assembly in certain cases.

We are planning to bring more customization features and options in the near future.

Can you give some examples of robotics applications using Orbbec and Microsoft's technologies?

Chen: Orbbec is one of the few providers that has the capability to design and manufacture its own 3D cameras and build from the ground up.

We provide both embedded and stand-alone camera systems to give our customers different options when they’re building their own products. The embedded camera systems allow robotics companies to create tailored solutions with a better look, finish, and experience for the end user.

Our joint development with Microsoft is focused on designing a state-of-the-art ToF-based embedded system to suit the needs of more applications. Connecting this sensor to Microsoft’s Azure cloud platform will enable computer vision and AI developers to build better solutions for the robotics market. This new product is still under development, with an expected release in 2022.

Could this also be useful in drones or autonomous vehicles?

Chen: Yes. As in robotics, 3D cameras can be used for SLAM [simultaneous localization and mapping] and obstacle avoidance.

Also, in autonomous vehicles, 3D cameras can help monitor the driver and passengers inside the vehicle. For example, to combat distracted driving, depth cameras are a critical tool for making sure drivers don’t leave their seats or completely neglect their role while the car is driving autonomously.

Where do you see the 3D sensing market going in the near future? Is it more a matter of educating the market, or further refining the technology?

Chen: The 3D sensor market is expected to boom to $9.4 billion by 2026, and most of that growth will take place on the B2B side. Advances in camera efficiency will continue to push more markets and industries to adopt 3D cameras, both in their products and in their operations.

In the next five years, tools and solutions used in industry, farming, warehousing, and manufacturing will be among the first to make automation a key part of operational cost savings. As a result, expect those sectors to turn to embedded 3D vision systems, which are exponentially increasing innovation, flexibility, and integration capability and opening up new possibilities for these industries.

In the past few years, more and more consumer products have incorporated depth-sensing cameras. From our phones to autonomous vacuum cleaners, many everyday electronics now contain depth cameras in response to consumer demand for more advanced features.

This trend will continue to grow across almost every field, but two of the biggest areas of growth will be mobile devices and AR/VR solutions.
