Generative AI and “cloud-native” APIs and microservices are coming to the edge, said NVIDIA Corp. The company today announced a major expansion of two frameworks on the NVIDIA Jetson platform for robotics and artificial intelligence at the edge: NVIDIA Isaac ROS has entered general availability, and NVIDIA Metropolis is expanding on Jetson.

“Generative AI is bringing the power of transformer models and large language models to virtually every industry,” wrote Amit Goel, director of product management for autonomous machines, in a blog post. “That reach now includes areas that touch edge, robotics, and logistics systems: defect detection, real-time asset tracking, autonomous planning and navigation, human-robot interactions, and more.”

“All of these capabilities are arguably greater than anything we've done in the past 10 years in the areas of embedded computing and robotics,” said Deepu Talla, vice president of embedded and edge computing at NVIDIA, at a press briefing.

Developers must keep up with AI, market needs

AI and robotics are addressing increasingly complicated scenarios, leading to longer development cycles to build applications for the edge, according to NVIDIA. Programmers need expert skills and a lot of time to reprogram robots and AI systems “on the fly” to meet the changing needs of manufacturing lines, environments, and customers, it added.

“Generative AI offers 'zero-shot' learning — the ability for a model to recognize things specifically unseen before in training — with a natural-language interface to simplify the development, deployment, and management of AI at the edge,” said the Santa Clara, Calif.-based company.

More than 1.2 million developers and over 10,000 customers have already used NVIDIA AI and the Jetson platform, including Amazon Web Services, Cisco, John Deere, Medtronic, PepsiCo, and Siemens, NVIDIA said.

To accelerate AI application development and deployments at the edge, NVIDIA has also created a Generative AI Lab for Jetson developers to use with the latest open-source generative AI models.

Generative AI could transform industries

Generative AI can ease development by enabling users to provide more intuitive prompts and change AI models, said NVIDIA. Those models can be more flexible in detecting, segmenting, tracking, searching, and even reprogramming anything, it said.

In addition, models created with generative AI can outperform traditional convolutional neural network (CNN)-based models, NVIDIA noted.

“Legacy CNNs are rigid and rule-based and require lots of labeled data, slowing the development cycle,” said Talla. “Generative AI is generalizable and with natural-language prompts, anyone can get the right output.”

Generative AI could add $10.5 billion in revenue for manufacturing operations worldwide by 2033, predicted ABI Research.

“Generative AI will significantly accelerate deployments of AI at the edge with better generalization, ease of use, and higher accuracy than previously possible,” Talla said. “This largest-ever software expansion of our Metropolis and Isaac frameworks on Jetson, combined with the power of transformer models and generative AI, addresses this need.”

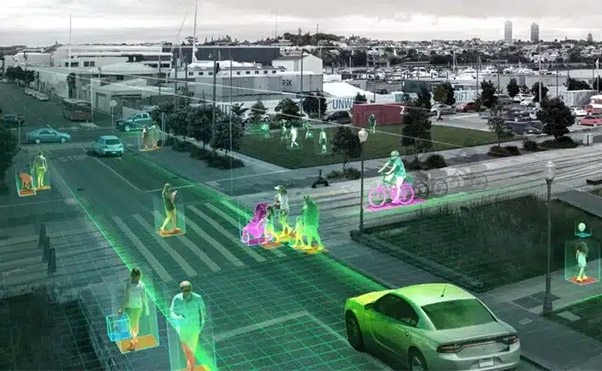

He cited potential use cases at the edge, including video search and robot teaching. Real-time enhancements to NVIDIA Orin include a more accurate PeopleNet transformer for detecting pedestrians, new models for detection and classification using text prompts, and a multi-modal AI visual agent for contextual searches.

Lab promises to ease edge development

The Jetson Generative AI Lab provides optimized tools and tutorials for deploying open-source large language models (LLMs), as well as diffusion models to interactively generate images. It also offers vision language models (VLMs) and vision transformers (ViTs) that combine vision AI and natural language processing to provide comprehensive understanding of a scene, explained NVIDIA.

NVIDIA said developers can use the NVIDIA TAO Toolkit to create efficient and accurate AI models for the edge. TAO includes a low-code interface to fine-tune and optimize vision AI models, including ViT and vision foundational models.

Developers can also customize foundational models like NVIDIA NV-DINOv2 or public models like OpenCLIP to create accurate vision AI models with very little data, said the company. In addition, TAO now includes VisualChangeNet, a new transformer-based model for defect inspection.

Metropolis includes new frameworks

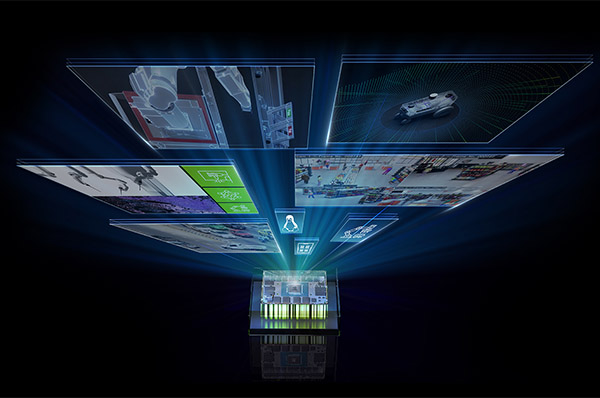

NVIDIA Metropolis is intended to make it easier and more cost-effective for enterprises to adopt vision AI-enabled systems to improve operational efficiency and safety. It has a collection of APIs and microservices for fast development of vision-based applications.

More than 1,000 companies, including BMW Group, Kroger, Tyson Foods, and Infosys, are using NVIDIA Metropolis developer tools to solve Internet of Things (IoT), sensor processing, and operational challenges with vision AI, noted NVIDIA. It said the rate of adoption is quickening, as users have downloaded the tools more than 1 million times.

NVIDIA said it plans to make an expanded set of Metropolis APIs and microservices available on Jetson by year's end. It said that hundreds of customers use Isaac to develop high-performance systems across diverse domains, including agriculture, warehouse automation, last-mile delivery, and service robotics, among others.

“For example, Metropolis could be used with cameras in a store to detect traffic and people,” said Talla. “It could generate insights and then send alerts.”

Isaac adds perception, simulation abilities

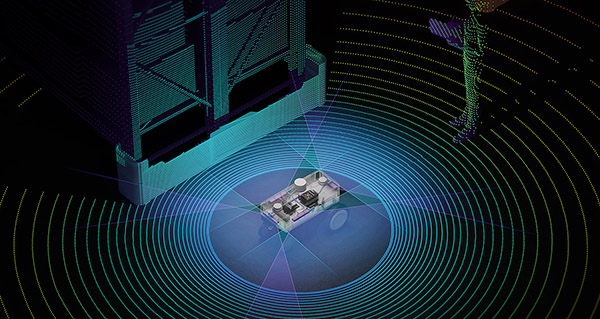

At ROSCon 2023, NVIDIA announced improvements to perception and simulation capabilities with new releases of Isaac ROS and Isaac Sim software. Built on the open-source Robot Operating System (ROS), “Isaac ROS brings perception to automation, giving eyes and ears to the things that move,” it said.

NVIDIA stated that its graphics processing unit (GPU)-accelerated GEMs enable visual odometry, depth perception, 3D scene reconstruction, and localization and planning. Robotics developers can use the tools to swiftly engineer systems for a range of applications, it said.

“With an enhanced SDG [synthetic data generation], improved ROS support, and new sensor models for generative AI, Isaac accelerates AI for the ROS community,” said Talla.

The latest Isaac ROS 2.0 release brings the platform to production-ready status, enabling developers to create and bring high-performance robots to market, explained the company.

“ROS continues to grow and evolve to provide open-source software for the whole robotics community,” said Geoff Biggs, chief technology officer of the Open Source Robotics Foundation. “NVIDIA’s new prebuilt ROS 2 packages, launched with this release, will accelerate that growth by making ROS 2 readily available to the vast NVIDIA Jetson developer community.”

New reference AI workflows to save development time

Before robots and other smart systems can be ready for production, companies need to optimize the training of AI models tailored to their specific use cases. This may include implementing robust security, orchestrating the application, managing fleets, establishing seamless edge-to-cloud communication, and more, NVIDIA observed.

The company announced a curated collection of AI reference workflows based on Metropolis and Isaac frameworks that enable developers to quickly adopt the entire workflow or selectively integrate individual components. They include network video recording (NVR), automatic optical inspection (AOI), and autonomous mobile robots (AMRs).

“NVIDIA Jetson, with its broad and diverse user base and partner ecosystem, has helped drive a revolution in robotics and AI at the edge,” said Jim McGregor, principal analyst at Tirias Research.

“As application requirements become increasingly complex, we need a foundational shift to platforms that simplify and accelerate the creation of edge deployments,” he added. “This significant software expansion by NVIDIA gives developers access to new multi-sensor models and generative AI capabilities.”

NVIDIA promises more services

NVIDIA also announced a collection of system services, which it described as “fundamental capabilities that every developer requires when building edge AI solutions.” They are designed to simplify integration into developer workflows and eliminate the need to build them from scratch, it said.

“The new NVIDIA JetPack 6, expected to be available by year’s end, will empower AI developers to stay at the cutting edge of computing without the need for a full Jetson Linux upgrade, substantially expediting development timelines and liberating them from Jetson Linux dependencies,” said the company.

“This is our biggest system software update ever,” said Talla. “Users can now bring their own kernels and distributions for easy-to-upgrade AI and compute. Previously, they had to update the whole stack. It also includes new system services and enhanced platform security.”

JetPack 6 will also rely on Linux distribution partners to expand Linux-based options, including Canonical's Optimized and Certified Ubuntu, Wind River Linux, Concurrent Real-Time's RedHawk Linux, and various Yocto-based distributions.

Ecosystem can exploit platform expansion

NVIDIA said its Jetson partner ecosystem provides support ranging from hardware, AI software, and application-design services to sensors, connectivity, and developer tools. Innovators in the NVIDIA Partner Network play a vital role in providing the building blocks and sub-systems for many commercial products, it said.

Jetson partners can use the latest JetPack release to provide increased performance and capabilities, accelerating their time to market and expanding their customer base, claimed NVIDIA. Independent software vendor (ISV) partners will also be able to expand their offerings for Jetson, it said.

On Tuesday, Nov. 7, at 9 a.m. PT, a webinar on “Bringing Generative AI to Life with NVIDIA Jetson” will feature technical experts who will discuss topics including accelerated APIs and quantization methods for deploying LLMs and VLMs on Jetson, optimizing vision transformers with TensorRT, and more.

Interested developers can also sign up for early access to Metropolis.
