NVIDIA

Carter V2 mobile robot with semantic lidar in a warehouse scene.

Perceiving and understanding the world around them is a critical challenge for autonomous robots. In conjunction with ROS World 2021, NVIDIA Corp. announced its latest efforts to deliver performant perception technologies to the Robot Operating System developer community.

The Santa Clara, Calif.-based company said these initiatives will accelerate product development, improve performance, and simplify the task of incorporating computer vision and artificial intelligence functionality into ROS-based applications.

NVIDIA claimed that it will provide the highest-performing real-time stereo visual odometry system as a ROS package. The company also noted that all NVIDIA inference deep neural networks (DNNs) on NVIDIA GPU Cloud (NGC) will be accessible through a ROS package, with examples for image segmentation and pose estimation.

In addition, the new Synthetic Data Generation (SDG) workflow will allow NVIDIA Isaac Sim users to create production-quality datasets at scale for vision AI training. The company claimed that Isaac Sim on Omniverse, with out-of-the-box support for ROS, is the most developer-friendly release to date.

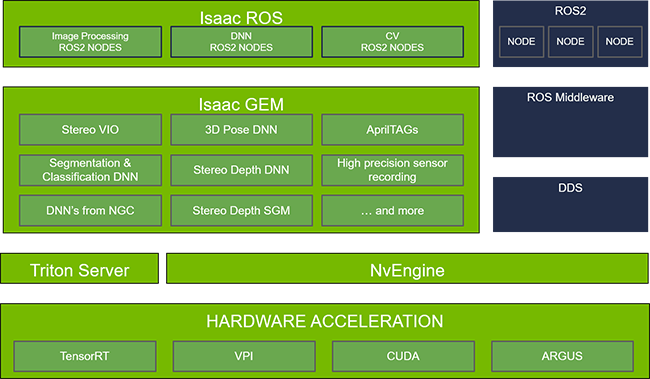

Isaac ROS GEMs are ROS packages for image processing and computer vision, including DNN-based algorithms that are highly optimized for NVIDIA graphics processing units (GPUs) and the full Jetson lineup. We'll take a closer look at some of these GEMs below.

As autonomous machines move around in their environments, they must keep track of where they are. Visual odometry solves this problem by estimating where a camera is relative to its starting position. The Isaac ROS GEM for Stereo Visual Odometry provides this functionality to ROS developers.

According to NVIDIA, this GEM offers the best accuracy of any real-time (more than 60 fps at 720p) stereo camera visual odometry solution. Results on the widely used KITTI benchmark are publicly available from the Karlsruhe Institute of Technology.

In addition to being very accurate, this GPU-accelerated package runs extremely fast. NVIDIA said it is now possible to process high-definition resolution in real time on a Jetson AGX Xavier.
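To make "estimating where a camera is relative to its starting position" concrete: visual odometry produces a motion estimate between consecutive frames, and chaining those estimates gives the pose relative to the start. The sketch below is a simplified, planar (x, y, heading) illustration of that chaining step only. It is not the Isaac ROS GEM's algorithm, which estimates full 3D motion from stereo images; `Pose2D` and `integrate_odometry` are hypothetical names, and the per-frame motions are made-up inputs.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose: position (x, y) in meters and heading theta in radians."""
    x: float
    y: float
    theta: float

    def compose(self, delta: "Pose2D") -> "Pose2D":
        """Apply a motion `delta` that is expressed in this pose's own frame."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        heading = self.theta + delta.theta
        return Pose2D(
            x=self.x + c * delta.x - s * delta.y,
            y=self.y + s * delta.x + c * delta.y,
            # Wrap the heading back into (-pi, pi].
            theta=math.atan2(math.sin(heading), math.cos(heading)),
        )


def integrate_odometry(deltas):
    """Chain frame-to-frame motion estimates into poses relative to the start."""
    pose = Pose2D(0.0, 0.0, 0.0)  # the starting position defines the origin
    trajectory = [pose]
    for d in deltas:
        pose = pose.compose(d)
        trajectory.append(pose)
    return trajectory


if __name__ == "__main__":
    # Hypothetical per-frame estimates: move 1 m forward, then turn left 90 degrees.
    step = Pose2D(1.0, 0.0, math.pi / 2)
    end = integrate_odometry([step] * 4)[-1]
    print(f"x={end.x:.3f} y={end.y:.3f} theta={end.theta:.3f}")
```

Because each pose is built on the previous one, any error in a single frame-to-frame estimate carries into every later pose. That accumulated drift is why per-frame accuracy matters so much for odometry.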

ROS developers can use any of NVIDIA’s numerous inference models available on NGC, or even provide their own DNNs, with the DNN Inference GEM, a set of ROS 2 packages. Developers can further tune pretrained models or optimize their own models with the NVIDIA TAO Toolkit.

After optimization, these packages deploy models with either TensorRT or Triton, NVIDIA’s inference server. The nodes that use TensorRT, NVIDIA’s high-performance inference software development kit (SDK), achieve optimal inference performance. If TensorRT does not support the desired DNN model, then NVIDIA Triton should be used to deploy the model.
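The deployment rule described above (use TensorRT when it supports the model, otherwise fall back to Triton) can be expressed as a small decision function. This is a hypothetical sketch, not part of the Isaac ROS packages: `choose_inference_backend` is an invented name, the operator names are placeholders, and TensorRT's real list of supported layers depends on its version and is documented by NVIDIA.

```python
from typing import Iterable, Set, Tuple


def choose_inference_backend(
    model_ops: Iterable[str], tensorrt_supported_ops: Set[str]
) -> Tuple[str, Set[str]]:
    """Pick a backend for a model, given the operators it uses.

    Returns ("tensorrt", empty set) if every operator is supported, otherwise
    ("triton", the unsupported operators).
    """
    missing = set(model_ops) - set(tensorrt_supported_ops)
    if not missing:
        return "tensorrt", missing  # preferred path for best inference performance
    return "triton", missing  # fallback serving path for models TensorRT can't run


if __name__ == "__main__":
    supported = {"Conv", "Relu", "MaxPool", "Resize"}  # hypothetical subset
    print(choose_inference_backend(["Conv", "Relu", "Resize"], supported))
    print(choose_inference_backend(["Conv", "MyCustomOp"], supported))
```

Returning the unsupported operators alongside the choice makes the fallback explainable, which helps when deciding whether a model is worth reworking for TensorRT.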

The GEM includes native support for U-Net and Deep Object Pose Estimation (DOPE). The U-Net package, based on TensorRT, generates semantic segmentation masks from images, and the DOPE package estimates the 3D pose of each detected object.

According to NVIDIA, the DNN Inference GEM is the fastest way to incorporate performant AI inference into a ROS application.
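For readers unfamiliar with segmentation output: a network such as U-Net typically produces a score for every class at every pixel, and a mask is formed by keeping the highest-scoring class per pixel (an argmax). The sketch below shows that generic post-processing step in plain Python. It is an assumption about typical usage, not a description of the Isaac ROS U-Net package's actual output format; `logits_to_mask` and the class labels are hypothetical.

```python
from typing import List, Sequence


def logits_to_mask(logits: Sequence[Sequence[Sequence[float]]]) -> List[List[int]]:
    """Convert per-pixel class scores (H x W x C) into a class-index mask (H x W).

    Ties go to the lowest class index, because max() returns the first maximum.
    """
    return [
        [max(range(len(scores)), key=scores.__getitem__) for scores in row]
        for row in logits
    ]


if __name__ == "__main__":
    # Hypothetical 2 x 2 image with 3 classes: 0 = background, 1 = floor, 2 = pallet.
    scores = [
        [[2.0, 0.1, 0.3], [0.2, 1.5, 0.1]],
        [[0.1, 0.2, 3.0], [0.4, 0.3, 0.2]],
    ]
    print(logits_to_mask(scores))
```

In practice this runs on a GPU tensor rather than nested lists, but the per-pixel rule is the same.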

Isaac Sim will be generally available in November 2021 as the most developer-friendly release to date, said NVIDIA. The company claimed that numerous improvements to the user interface, performance, and building blocks will let developers build better simulations much faster.

In addition, an improved ROS bridge and more ROS samples will enhance the developer experience, said the company.

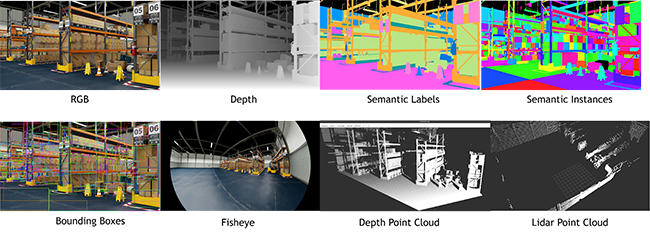

An autonomous robot requires large and diverse datasets to train the numerous AI models running its perception stack. Getting all of this training data from real-world scenarios is cost-prohibitive and, in corner cases, potentially dangerous.

The new synthetic data workflow provided with Isaac Sim is designed to build production-quality datasets that address the safety and quality concerns around robots.

The developer building the datasets can control the stochastic distribution of the objects in the scene, the scene itself, the lighting, and the synthetic sensors. The developer also has fine-grained control to ensure that important corner cases are included in the datasets.
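The idea of controlling the random distributions behind each scene while still guaranteeing corner cases can be illustrated with a small sampler. This is a minimal sketch under stated assumptions: it does not use the Isaac Sim or Omniverse APIs, and `SceneConfig`, `sample_scenes`, the parameter names, and the ranges are all hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class SceneConfig:
    """Hypothetical scene parameters for one synthetic sample."""
    num_boxes: int
    light_intensity: float  # arbitrary units
    camera_height_m: float
    forklift_occluding: bool


def sample_scenes(n: int, seed: int, corner_cases: List[SceneConfig]) -> List[SceneConfig]:
    """Draw n randomized scenes, then append every required corner case."""
    rng = random.Random(seed)  # dedicated generator: output depends only on the seed
    scenes = [
        SceneConfig(
            num_boxes=rng.randint(0, 20),                  # uniform integer in [0, 20]
            light_intensity=rng.uniform(200.0, 1500.0),    # uniform lighting range
            camera_height_m=rng.gauss(0.5, 0.05),          # sensor mount jitter
            forklift_occluding=rng.random() < 0.05,        # rare event, about 5% of scenes
        )
        for _ in range(n)
    ]
    # Random sampling alone may miss rare situations, so add them explicitly.
    scenes.extend(corner_cases)
    return scenes


if __name__ == "__main__":
    dim_and_occluded = SceneConfig(20, 50.0, 0.5, True)  # hypothetical corner case
    for scene in sample_scenes(3, seed=42, corner_cases=[dim_and_occluded]):
        print(scene)
```

Appending corner cases explicitly, rather than hoping the random draws produce them, is one simple way to get the coverage guarantee the workflow describes.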

Finally, this workflow supports versioning and debugging information so that datasets can be exactly reproduced for auditing and safety purposes.
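One common way to make a generated dataset exactly reproducible is to store a manifest (generator version, random seed, and parameters) alongside a fingerprint of the output, so an auditor can regenerate the data and compare. The sketch below shows that pattern with Python's standard library. It assumes nothing about how Isaac Sim actually records versioning information; `generate_dataset`, `fingerprint`, and the manifest fields are hypothetical.

```python
import hashlib
import json
import random


def generate_dataset(manifest: dict) -> list:
    """Toy generator whose output depends only on the manifest (seed + parameters)."""
    rng = random.Random(manifest["seed"])
    lo, hi = manifest["light_range"]
    return [round(rng.uniform(lo, hi), 3) for _ in range(manifest["num_samples"])]


def fingerprint(obj) -> str:
    """Stable SHA-256 of JSON-serializable data (sorted keys, fixed separators)."""
    blob = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()


if __name__ == "__main__":
    manifest = {"version": "v1.0", "seed": 1234, "num_samples": 5,
                "light_range": [200.0, 1500.0]}
    data = generate_dataset(manifest)
    record = {"manifest": manifest,
              "manifest_sha256": fingerprint(manifest),
              "data_sha256": fingerprint(data)}
    # Audit step: regenerate from the stored manifest and compare fingerprints.
    assert fingerprint(generate_dataset(record["manifest"])) == record["data_sha256"]
    print(record["manifest_sha256"][:12], record["data_sha256"][:12])
```

Sorting keys before hashing means two manifests with the same content always produce the same fingerprint, regardless of the order in which their fields were written.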

Developers interested in getting started with Isaac ROS can learn more on NVIDIA's site.

Attendees at ROS World 2021 are welcome to stop by NVIDIA's virtual booth, attend the NVIDIA ROS roundtable, watch the technical presentation on Isaac Sim, and more.

On Oct. 21 at 4:00 p.m. CT, Hammad Mazhar, lead simulation engineer at NVIDIA, will demonstrate how Isaac Sim can be used to simulate different ROS- and ROS 2-driven workflows.

Also today at 5:20 p.m. CT, the “Simulation Tools on ROS” panel will discuss the past, present, and future of simulation. Participants will include Liila Torabi, NVIDIA Isaac senior product manager, and developers of some of the world's largest robotics simulation products.

Gerard Andrews is a senior product marketing manager focused on the robotics developer community. Prior to joining NVIDIA, he was product marketing director at Cadence, where he was responsible for product planning, marketing, and business development for licensable processor intellectual property.

He holds an M.S. in electrical engineering from Georgia Institute of Technology and a B.S. in electrical engineering from Southern Methodist University.