The emergence of ubiquitous sensing and the Internet of Things (IoT) has sparked a migration of advanced processing, memory and communication resources to the network’s edge. In the process, these trends have caused a shift away from simple sensors to smart sensors, a move toward deploying greater intelligence at the data source.

Yet the chain reaction does not stop there. Because more robust systems are now present at the edge, developers and users have started to push for analytics and the ability to run more complex applications at the sensor level. This means giving smart sensors more muscle. Most recently, moves to enhance sensor capability have led to implementations of various forms of artificial intelligence (AI) in smart sensing systems.

Keeping up with the evolution of sensors’ role at the edge and the need to bolster the devices’ capabilities have challenged design engineers to develop new systems and rethink the application of more established technologies.

Sensors’ Role at the Edge

The intertwining of smart sensing, analytics and artificial intelligence in the evolution of edge systems makes sense. Armed with greater intelligence, smart sensors offer significant advantages. By processing data locally, these systems reduce the bandwidth used to interact with the cloud, enhance security, increase safety in time-critical applications, reduce latency and enable real-time decision-making.

“By essentially turning sensors into little computers, they become a great tool for the Internet of Things,” says Rochak Sharma, senior director, Predix product development, at GE Digital. “Not only are they able to gather the data, but they also convert that data into meaningful insights by using their data processing and analysis abilities.”
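As a rough illustration of how local processing cuts cloud traffic, the sketch below condenses a window of raw readings into a small summary before anything is transmitted. The function name `summarize_window`, the summary fields, the alarm threshold and the sample values are all hypothetical, chosen only for illustration.

```python
import json
import statistics


def summarize_window(samples, alarm_threshold):
    """Reduce a window of raw readings to a compact summary (hypothetical format)."""
    peak = max(samples)
    return {
        "n": len(samples),
        "mean": round(statistics.fmean(samples), 2),
        "max": peak,
        "alarm": peak > alarm_threshold,
    }


# Made-up raw sensor counts: a steady signal with one spike.
raw = [500 + (i % 7) for i in range(1000)]
raw[400] = 900

summary = summarize_window(raw, alarm_threshold=800)

raw_bytes = len(json.dumps(raw))          # payload if every reading were uploaded
summary_bytes = len(json.dumps(summary))  # payload of the locally computed summary

print(summary)
print(f"raw payload: {raw_bytes} bytes, summary payload: {summary_bytes} bytes")
```

The spike is still reported through the `alarm` flag, but the sensor sends a few dozen bytes instead of the full window.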

Although smart sensors have been in play for years, technology developers are still sorting out how sensing devices fit into the IoT environment. That said, a number of visions have surfaced.

One such view anticipates an inclusive, global fabric. “We expect the system to consist of many smart sensors that interact locally with each other—we call this the ‘extreme edge level’—but also with multiple edge devices that interact with each other and with local cloud servers, eventually up to the real global cloud server,” says Rudy Lauwereins, vice president of digital and user-centric solutions at IMEC.

Other industry leaders envision a system with more limited interaction between smart sensors and the cloud. “Having been extensively involved in fingerprint and heart-based sensing/access control, I can add that there is a desire not to rely on the cloud for security-sensitive applications and applications like automotive, where the cloud may not always be available,” says David Schie, CEO and cofounder of AIStorm.

New Demands on Smart Sensors

Before moving forward, designers must determine whether smart sensors have the resources to fully support advanced analytics, including the current selection of AI offerings.

A look at what edge systems need to run more complex applications reveals that today’s processors, memory and long-term storage fall short of the future needs of smart sensor designers. Next-generation systems must have greater integration, speed, flexibility and energy efficiency.

Some contend that this feature set will require enhanced sensing technologies, new types of processors and compute architectures that break with traditional concepts and blur the lines between conventional computing system elements.

Sensor Fusion Challenges

Sensor fusion is one area requiring further development to help smart sensors better support edge analytics. As with many tasks performed at the edge, timing is everything, and fusion is best performed sooner rather than later.

“Smart sensors need to perform analytics directly on the raw data of multiple sensors to come to a decision instead of what happens today. Every sensor performs its own analysis and makes a decision, and that information is then compared with the conclusions of other sensors,” says Lauwereins. “Doing early fusion increases the accuracy of detection substantially.”

The challenges involved in improving sensor fusion—as with many other compute-intensive applications—highlight the need for new and better processor-memory architectures.
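The difference between the two fusion styles described above can be sketched with a toy simulation. Everything here is an assumption made for illustration: three identical sensors, Gaussian noise, a fixed threshold and a simple average as the fused quantity. It is not a model of any real sensor stack.

```python
import random


def late_fusion(readings, threshold):
    """Each sensor decides on its own; the decisions are then majority-voted."""
    votes = sum(r > threshold for r in readings)
    return votes > len(readings) / 2


def early_fusion(readings, threshold):
    """Raw readings are combined first; one decision is made on the fused value."""
    return sum(readings) / len(readings) > threshold


def accuracy(fuse, trials=20000, sensors=3, noise=1.0, seed=0):
    """Fraction of correct detections on simulated data (toy Gaussian noise model)."""
    rng = random.Random(seed)  # same seed, so both strategies see the same data
    correct = 0
    for _ in range(trials):
        present = rng.random() < 0.5       # is there an object in this trial?
        level = 1.0 if present else 0.0    # ideal, noise-free reading
        readings = [level + rng.gauss(0.0, noise) for _ in range(sensors)]
        correct += fuse(readings, threshold=0.5) == present
    return correct / trials


late_acc = accuracy(late_fusion)
early_acc = accuracy(early_fusion)
print(f"late fusion accuracy:  {late_acc:.3f}")
print(f"early fusion accuracy: {early_acc:.3f}")
```

In this toy setup, thresholding each sensor separately discards how far each reading sat from the threshold, which is why deciding on the combined raw data comes out ahead.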

Choices and Tradeoffs

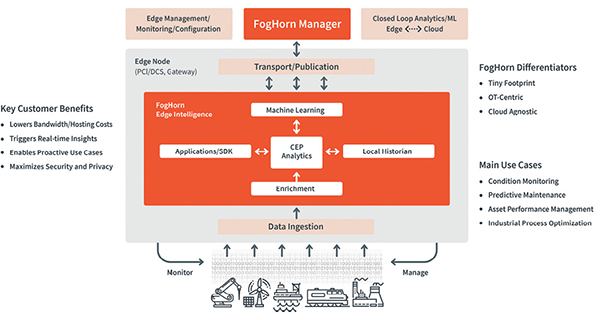

Today, digital processing architectures dominate the smart sensor and embedded system sectors, fielding such technologies as graphics processing units (GPUs), field-programmable gate arrays (FPGAs), application-specific integrated circuits (ASICs), systems on a chip (SoCs) and CPUs. When picking the most appropriate platform for a smart sensor application, the designer must juggle a number of criteria. These range from the type of analytics to be performed, environmental constraints and energy budgets to programming flexibility, throughput requirements and cost.

Each type of processing hardware has its own strengths and weaknesses, which forces the development team to make tradeoffs. A close look at the application’s requirements often steers the designers to the best choice.

“If latency is the key criteria, then the use of an FPGA or ASIC trumps GPU because they run on bare metal and so are more suitable for real-time AI,” says Ramya Ravichandar, vice president of product management at FogHorn. “In situations where power consumption is critical, FPGAs tend to consume less power than GPUs. In comparing ASICs and FPGAs, the former have longer production cycles and are more rigid in terms of accommodating design changes. In this regard, GPUs are probably the most flexible because one can manipulate software libraries and functions with much quicker turnaround time.”

Beyond these tradeoffs, designers must also accommodate other issues. For example, GPUs are designed for uncorrelated-data applications, while sensors are suited to correlated-data applications. This mismatch can reduce processing efficiency.

“Research has shown a loss of 70% to 80% of processing capability for a GPU-based approach due to the difficulty of breaking up problems into chunks that can be sent to a huge number of parallel processors,” says Schie.

One constant in this area, however, is that the leading digital platforms are works in progress. Chipmakers serving the edge know the shortcomings of the latest generation of processors; these companies are constantly upgrading their offerings and tailoring processors to better serve the edge.

“SparkFun’s new edge development, based on a collaboration with Google and Ambiq, supports TensorFlow Lite and is priced around $15,” says John Crupi, vice president of edge analytics at Greenwave Systems. “NVIDIA just announced a GPU-based edge board called Jetson Nano for $99. And Qualcomm’s AI Engine runs on its newer generation Snapdragon-based boards. Ultimately, the best processor type will depend on power requirements, cost and use cases.”

One Way to Eliminate the Bottleneck

As impressive as the advances in the digital sector are, some developers have begun to look beyond conventional technology to explore new types of processors and computing architectures.

In their search, designers increasingly place a premium on technologies that promise to deliver high throughput and excel at handling the large amounts of raw data generated by sensors at the edge. Furthermore, the introduction of AI has increased the need for greater processing speed.

At the heart of the problem lies the von Neumann bottleneck, a limitation on throughput caused by the need to transfer programs and data between memory and the processor. This architecture has increasingly proven to be an obstacle to real-time performance and low power consumption.

“Today’s GPUs come close to 100 TOPS, which is the compute level needed for real-time pedestrian recognition in video or for sensor fusion between RGB video and radar images,” says Lauwereins. “However, this comes at a power consumption of 200W to 300W, which is OK for a cloud server, but not for automotive, where you would need 10W to 20W. If you go to the mobile arena, the power envelope is 100mW up to max 1W, and for battery-operated IoT devices, the range is 1mW to 10mW.”
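The figures in the quote can be turned into a required compute efficiency in TOPS per watt. The sketch below makes one simplifying assumption that is not in the quote: that the same 100 TOPS workload would have to fit inside each power envelope. The names `POWER_BUDGETS_W` and `required_efficiency` are illustrative.

```python
TARGET_TOPS = 100  # compute level cited in the quote

# Power envelopes from the quote, in watts: (low, high).
POWER_BUDGETS_W = {
    "cloud GPU": (200, 300),
    "automotive": (10, 20),
    "mobile": (0.1, 1),
    "battery IoT": (0.001, 0.01),
}


def required_efficiency(tops, watts):
    """TOPS per watt needed to deliver `tops` within a `watts` budget."""
    return tops / watts


# For each tier: (efficiency at the high end of the budget, at the low end).
efficiency = {
    name: (required_efficiency(TARGET_TOPS, high), required_efficiency(TARGET_TOPS, low))
    for name, (low, high) in POWER_BUDGETS_W.items()
}

for name, (at_high, at_low) in efficiency.items():
    print(f"{name:12s} {at_high:>12,.2f} to {at_low:>12,.2f} TOPS/W")
```

Under that assumption, the automotive budget implies roughly 5 to 10 TOPS/W, against about 0.33 to 0.5 TOPS/W for the cloud-class GPU figures in the quote.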

To sidestep these obstacles, a number of technology developers now advocate an approach called in-memory computing, which calls for storing data in RAM rather than in databases hosted on disks. In this approach, memory essentially performs double duty, handling storage and computation tasks.

This precludes the need to shuttle data between memory and processor, saving time and reducing energy consumption by as much as 90%. In-memory computing can cache large amounts of data, greatly speed response times and store session data, which helps to optimize performance.

“We need to evolve to a new type of computing platform, where we lose less power in moving data up and down to the compute engine,” says Lauwereins. “We need to compute in memory, where the data stays where it is stored and can be processed at that same place.”

Processing Goes Analog

Another approach to smart sensor and edge analytics implementations focuses on an area that promises greater efficiency: analog processing. The catalyst for these development efforts stems from the fact that the leading processor contenders—namely GPUs, FPGAs and ASICs—require a digital data format.

The catch here is that sensor output naturally comes in analog form. This means that digital systems require data to go through an analog-to-digital conversion process, which can increase the system’s latency, power consumption and cost.

To address this problem—and to facilitate inclusion of AI in smart sensors—companies like Syntiant and AIStorm have chosen to provide analog data chips.

In general, analog processors solve problems by representing variables and constants with voltage. The systems manipulate these quantities using electronic circuits to perform math functions. For example, designers can use analog multipliers, exploiting the current/voltage relationship of the transistor to perform math. They can also use memristors and resistive RAM, where resistances are programmed as weights.
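The idea of resistances programmed as weights can be sketched with an idealized crossbar model. Each cell passes a current equal to its conductance times the applied voltage (Ohm's law), and the currents sharing a column wire add up (Kirchhoff's current law), so the array computes a matrix-vector product in one step. The function name and the values are hypothetical, and the model ignores wire resistance, device nonlinearity and noise; it does not represent any vendor's circuit.

```python
def crossbar_mvm(conductances_us, voltages_v):
    """Ideal resistive crossbar.

    conductances_us[i][j]: conductance (microsiemens) of the cell joining
                           input row i to output column j, i.e. the weight.
    voltages_v[i]:         voltage (volts) applied to input row i.
    Returns the current (microamps) collected on each output column.
    """
    rows = len(conductances_us)
    cols = len(conductances_us[0])
    return [
        sum(conductances_us[i][j] * voltages_v[i] for i in range(rows))
        for j in range(cols)
    ]


# Made-up weights (as conductances) and inputs (as voltages).
weights_us = [
    [1.0, 2.0],
    [3.0, 4.0],
    [5.0, 6.0],
]
inputs_v = [0.5, 0.25, 1.0]

currents_ua = crossbar_mvm(weights_us, inputs_v)
print(currents_ua)  # column currents in microamps
```

No value is fetched from a separate memory during the multiply: the weights stay where they are stored and the physics of the array does the summation.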

Another technique involves using switched capacitor circuits, where the system manipulates capacitor arrays to produce gain. In the case of AIStorm, the company has patented a charge domain approach, where the system multiplies electrons rather than voltage or current.

AIStorm has designed its AI-in-Sensor SoC to be integrated in smart sensors that target low-power applications. The SoC aims to achieve high throughput and energy efficiency by processing sensor data in its raw analog form without encoding it in a digital format. The company claims that the analog data can then be used to train AI and machine learning models for a wide range of different tasks, achieving greater efficiency than a digital system.

Then There Is Memory

Paralleling moves to take processing into the analog realm, some edge analytics and AI developers advocate implementing analog memory to achieve greater performance and energy efficiency.

“This approach stores information in an analog way,” says AIStorm’s Schie. “For example, analog floating gate memory can store the equivalent of eight bits in the space that digital uses to store a single bit. Basically, charge is added or removed, and the actual charge level is considered instead of a digital zero or one.”
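To make the eight-bits-per-cell comparison concrete, the sketch below treats a cell as holding one of 256 charge levels (2 to the power 8) rather than two. The evenly spaced levels, the normalized 0 to 1 value range and the function names are assumptions for illustration; a real floating gate cell also has noise and drift that this ignores.

```python
ANALOG_LEVELS = 2 ** 8  # 256 distinguishable charge levels, i.e. 8 bits per cell
DIGITAL_LEVELS = 2      # a conventional cell: zero or one


def program_cell(value, levels):
    """Map a value in [0, 1] to the nearest of `levels` evenly spaced charge levels."""
    if not 0.0 <= value <= 1.0:
        raise ValueError("value must be within [0, 1]")
    return round(value * (levels - 1))


def read_cell(level, levels):
    """Convert a stored charge level back to a value in [0, 1]."""
    return level / (levels - 1)


weight = 0.42  # made-up value to store

analog_level = program_cell(weight, ANALOG_LEVELS)
analog_value = read_cell(analog_level, ANALOG_LEVELS)

digital_level = program_cell(weight, DIGITAL_LEVELS)
digital_value = read_cell(digital_level, DIGITAL_LEVELS)

print(f"256-level cell: level {analog_level}, reads back {analog_value:.4f}")
print(f"2-level cell:   level {digital_level}, reads back {digital_value:.4f}")
```

A single multi-level cell recovers the stored value to within half a level step, whereas a single two-level cell can only report the nearer of 0 and 1.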

Examples of this memory include Y-Flash from TowerJazz and SONOS embedded flash memory from Cypress Semiconductor.

Tools for the Edge

Developing smart sensors that can perform edge analytics, including AI, presents a unique set of hardware challenges. Creating the software for smart sensors can be just as complex. Even engineers with high-level software training experience difficulties.

The edge is very different from a server environment because the memory available is extremely limited and the data is not correlated. As such, architectures and tools that work well in an environment of near-limitless memory and processing, such as the cloud, do not transplant well.

“The key is to understand that edge computing technology has evolved to the point that focus can be placed on the analytic instead of starting at the board support package and building an entire software stack in-house,” says Joel Markham, chief engineer for embedded computing at GE Research.

“The use of container technology, for example, to quantize applications into microservices offers a key benefit to those wanting to deploy smart sensors in that the care and feeding of these systems can be captured in reusable modules that can service the smart sensor and the application throughout its life-cycle. This use of micro-services also means that the rest of the edge analytic can be assembled, tested and deployed more effectively in just about any measure you wish to impose.”

Complementing this approach, myriad tools from the various platform vendors can create the basic building blocks of smart sensor applications. For example, open-source tools can train a given model or build an analytic for subsequent optimization and execution on the selected hardware.

Some of these tools bridge the gap between existing development platforms and the new demands arising in smart sensor, analytics, and AI design. “There are many tools already being used to develop and expand our understanding and use of AI and machine learning,” says Wiren Perera, senior director of IoT strategy at ON Semiconductor. “In terms of the edge, cross-compilers enable the use of existing software algorithms/IP. These are perhaps one of the most relevant and important today.”

A new generation of such tools that takes this approach one step further is also in the works. “Code collaboration tools and compilers are needed, and companies such as SensiML, which was acquired by QuickLogic, are starting to explore this space with automatic code generation tools virtualized on powerful servers that generate code that is then pushed to the edge,” says Marcellino Gemelli, director of global business development at Bosch Sensortec.

Perspectives and Prospects

All of these hardware and software challenges make smart sensor development a daunting task. Even with today’s tools, designers can feel hamstrung. Perhaps the greatest challenge for the designer, however, is achieving the right state of mind.

“In many cases, the challenge is how developers think about the problem when faced with an underlying platform that gives them this many degrees of freedom,” says GE’s Markham. “Developers are going to need to expand their thinking.”
