Editors’ Picks

Found in Robotics News & Content, with a score of 11.27

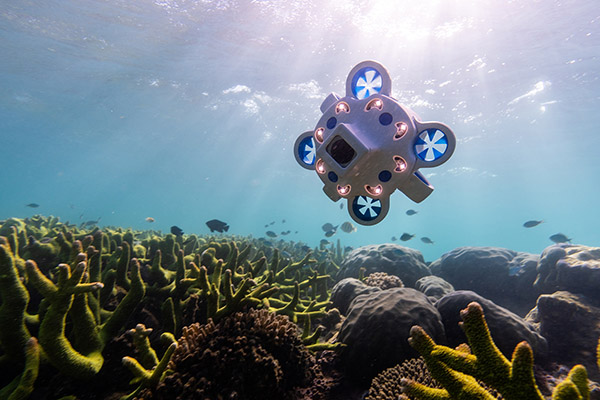

…navigational, sonar, propulsion, and data-capture technologies with sophisticated artificial neural network (ANN) intelligence, said Advanced Navigation. With support from research institutions including the University of Western Australia (UWA), Curtin University, and philanthropic organization Minderoo, Advanced Navigation said it is continuing to establish sustainable technologies to foster the growth of the blue economy, nationally and internationally. “It’s exciting to see Advanced Navigation continue to grow its team of engineers in Western Australia,” said Justin Geldard, a coastal and ocean researcher at the UWA Ocean Institute. “At UWA, we are researching how natural and artificial reef structures can protect coastlines by dissipating…

Found in Robotics News & Content, with a score of 14.35

…backed by the company’s dedicated service and support teams. Neural network recognizes billions of containers AMP added that its neural network has recognized more than 75 billion containers and packaging types in real-world conditions annually. AMP Vision is a modular computer vision system that helps operators understand material flow throughout key stages of sorting operations. When integrated with AMP Clarity, the company’s portal for recycling data and insights and robot optimization, customers can use AMP Vision to monitor real-time material characterization and performance measurement throughout a facility. AMP Vortex is designed to tackle film contamination and improve recovery of film…

Found in Robotics News & Content, with a score of 11.71

…released a whitepaper explaining how Thermal Ranger uses convolutional neural networks (CNNs) to locate and identify the thermal signatures of pedestrians and animals in the dark with a single infrared camera. “The technology stack includes everything from pre-processing, sensor fusion, localization data, and decision making to the actuator system,” Gershman told Robotics 24/7. “We built the sensor and reference camera system and the perception stack for that sensor-specific interpretation of the data. We can get a true 3D response from 2D images, and we've built a high-definition digital thermal sensor.” The CNN builds a disparity map, like stereo vision, in…

Found in Robotics News & Content, with a score of 8.01

…where learning helps because we can run a lightweight neural network and train it to process noisy sensor data observed by the moving robot.” “This is in stark contrast with most robots today,” he added. “Typically, a robot arm is mounted on a fixed base and sits on a workbench with a giant computer plugged right into it. Neither the computer nor the sensors are in the robotic arm! So the whole thing is weighty, hard to move around.” CSAIL's Improbable AI Lab has developed DribbleBot. Source: MIT More work to do There's still a long way to go in…

Found in Robotics News & Content, with a score of 17.00

…itself. She first used reinforcement learning to train a neural network, which in turn taught the robot to use extrinsic dexterity to grasp objects in its surrounding environment. In this case, the robot manipulates the object in its gripper with the help of the wall or other vertical surface. Previous research on extrinsic dexterity and robotics often designed systems for grasping objects based on assumptions about how a robot would contact an item. Sometimes, these systems also required more complicated hands or robots. Zhou used AI to develop a system with fewer hardware restrictions that could work for many different…

Found in Robotics News & Content, with a score of 23.19

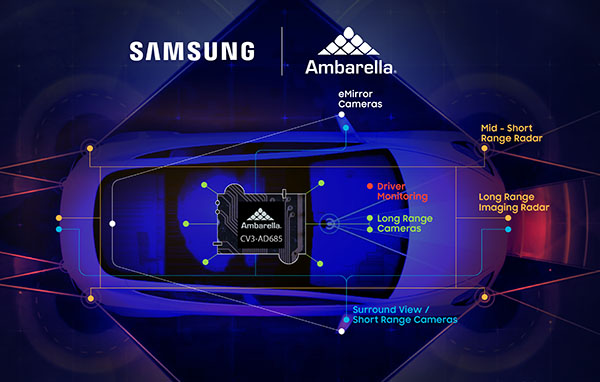

…video compression, advanced image and radar processing, and deep neural network processing to enable intelligent perception, fusion, and planning. The CV3-AD685 is the first production version of Ambarella’s CV3-AD family of automotive AI central domain controllers, according to the company. Tier 1 automotive suppliers have announced that they will offer systems using the CV3-AD product family, it said. Ambarella said the SoC integrates its next-generation CVflow AI engine, which includes neural network processing that is 20 times faster than the previous generation of CV2 SoCs. The CV3-AD685 also provides general-vector and neural-vector processing capabilities to deliver the overall performance required…

Found in Robotics News & Content, with a score of 24.44

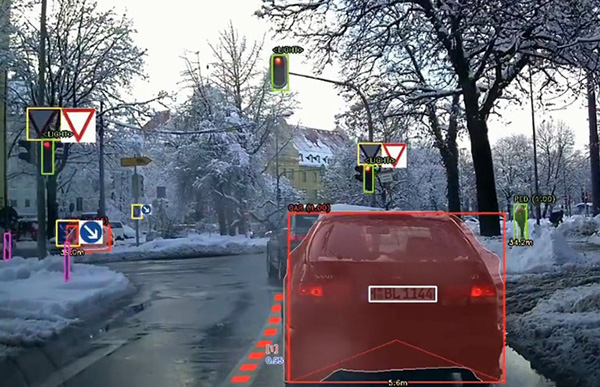

…experience and road safety,” he said. SVNet runs deep neural networking on TI processor SVNet was the first deep neural network to run deep learning-based object-detection software using the TDA2SX processor, claimed STRADVISION. It now also provides deep neural networking across the TI TDA4VM processor family, the company said. Texas Instruments said its TDA4 processor family offers an entry-level camera system for high-volume L2 ADAS applications and beyond. The processor is purpose-built for advanced driver-assistance systems (ADAS) and autonomous vehicle (AV) applications and builds on over a decade of experience in the ADAS processor market, said the company. SVNet’s implementation…

Found in Robotics News & Content, with a score of 14.64

…CPU and the 4.6 TOPS (trillion operations per second) neural processor unit (NPU) in the eCV1 chip, which provides dedicated machine learning instructions, a patented neural network engine, and Tensor Processing Fabric Flexible image and computer vision processing for domain-specific applications The new platform also supplies developers with H.264 compression for easy video streaming, said the company. XINK's Crypto Engine includes ARM's TrustZone for security, as well as a pseudo-random number generator and other encryption to protect user data through hardware-based isolation. eYs3D exhibits at CES 2023 eYs3D Microelectronics demonstrated the XINK development framework at CES Booth 15769 in the…

Found in Robotics News & Content, with a score of 17.12

…and even writing code. “Language models are a giant neural network that has read the entire Internet,” Schluntz told Robotics 24/7. “They were given the simple task of predicting the next word, and then that algorithm became 1,000 times larger than any other neural network. They can now predict answers to questions.” “However, what AI is good at is different from what we expected it to be good at,” he noted. “As OpenAI opened up the API, I've played with it. It can pass a Turing Test, but it still makes mistakes. What's really exciting is using language models as…

Found in Robotics News & Content, with a score of 10.59

…the motor skills it learned during training in a neural network that the researchers copied to the real robot. This approach did not require any hand engineering of the robot’s movements — a departure from traditional methods. Most robotic systems use cameras to create a map of the surrounding environment and use that map to plan movements before executing them. The process is slow and can often falter due to inherent fuzziness, inaccuracies, or misperceptions in the mapping stage that affect the subsequent planning and movements. Mapping and planning are useful in systems focused on high-level control but are not…

Found in Robotics News & Content, with a score of 13.61

…avoid possible collisions in traffic. Clevon uses multiple deep neural networks, fusing camera and radar data to allow its vehicles to detect and identify the dynamic environment. It said its technical team in Estonia is constantly developing and improving the CLEVON 1, so one teleoperator could eventually manage five to 10 vehicles simultaneously. The CLEVON 1 communicates via 4G with the operator in the control room and includes redundancies for safety. “Reliable data connectivity is crucial for this technology,” explained Vancauwenberghe. “To be sure, the vehicle has two SIM cards from two different providers, so there is always a network…

Found in Robotics News & Content, with a score of 19.38

…rather than tactile ones, he said. “But with large neural nets, if cameras are occluded, hundreds of sensors in fingertips and palms could take over,” McMillen said. “Vision could get the robot in the ballpark, with a smooth transition to touches. As artificial intelligence has continued to advance, there really has been a leap forward.” The latest humanoid systems can incorporate cameras, microphones, and motion planning with one-hundredth of the input nodes, he said. BeBop seeks levels of precision, reliability McMillen came into haptic sensing through music. “We started working with musical instruments and fabric sensors 14 years ago,” he…