Labor shortages have led to increased interest in automation, but designing, building, and scaling commercial robots has been a challenge. Brain Corp said its third-generation autonomy platform can help developers bring their innovations to market more quickly.

The San Diego-based company claimed that its software powers more than 26,000 autonomous mobile robots (AMRs) worldwide, “representing the largest fleet of its kind in the world.” In January, the company launched BrainOS Inventory Insights, a proprietary offering that uses data collected by BrainOS-powered inventory-scanning AMRs to help retailers identify out-of-stock and low-stock events, check planogram compliance, and verify that price tags are correct.

Jarad Cannon, chief technology officer at Brain Corp, has worked at the company for nearly six years. He told Robotics 24/7 that he has helped build out Brain's software engineering team as it developed cleaning, shelf-scanning, and tugger products.

“I led more and more parts of our software engineering operation, and then when I was appointed to CTO, I worked with other groups, since robotics is cross-functional,” Cannon said. “I'm now running all of the engineering functions—software, hardware, quality assurance, technical operations, program management groups, and some manufacturing.”

Brain Corp recently worked with manufacturer Flex to create the “Gen 3” modular robot architecture for accelerating AMR development. Cannon explained the company's approach to helping robotics developers.

Gen 3 offers improved AMR performance

Can you give some examples of how the advances in Gen 3 will affect operations of BrainOS-powered robots?

Cannon: We've developed our products with a platform mindset—we wanted to reuse software as much as possible, and we've learned a lot.

In Gen 3, we crystallized what we learned from Gen 2. There's more modularity, and we consolidated on a single compute platform. Compute is now fairly affordable and can be used in a wide range of applications.

Does Gen 3 require new sensors or processors for improved performance?

Cannon: On the sensor side, we had used a wide range of vendors, including 3D and 2D cameras and lidars. Luckily, pressure from the self-driving car industry has brought costs down, and sensors now provide more data.

By building around core compute capabilities and consolidating sensors, we've simplified our platform on both the hardware and software sides. Plus, we've incorporated a lot of customer feedback so that things run smoothly from the moment the customer turns on the robot.

There are still hurdles to overcome on a daily basis, but we've taken the most direct approach, building in docking for 24/7 operations and remote-control capabilities. BrainOS can form better models of the world, so robots are able to navigate better than before.

We're formalizing relationships with specific vendors. We're also evaluating new sensor modalities and keeping our options open.

Brain Corp consults with customers, partners

What feedback did you get from customers—and, ultimately, end users—that led to these advancements?

Cannon: Typically, it's a collection of things because of the wide range of markets Brain Corp addresses. Some customers are more tech-forward, and some are hesitant.

The same solution may work great for one customer, while others may need more of a collaboration. It's a matter of change management. We're offering more consulting services to help customers get the best use out of their machines.

It's often simple stuff, which may look minor from an engineering standpoint. But these are practical things customers have to deal with every day to keep their machines running. They include things like simplifying docking and updating maps to adjust to changes in the environment.

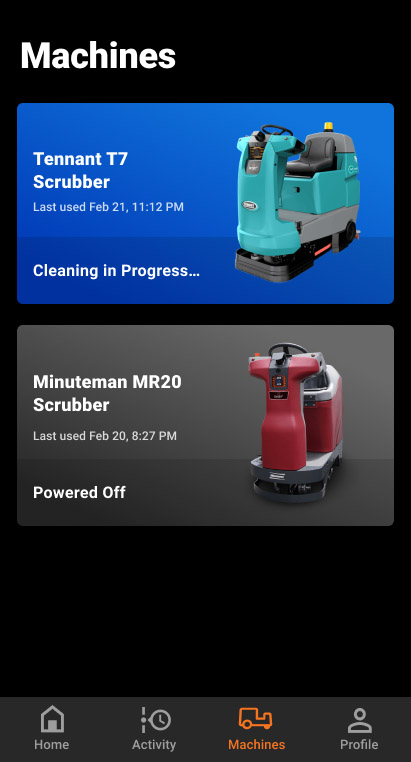

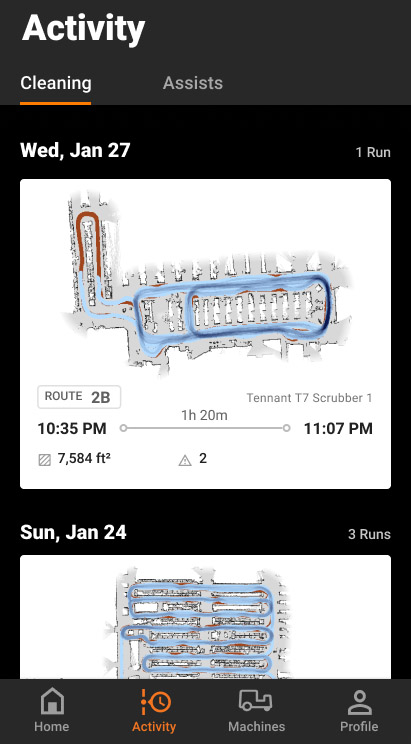

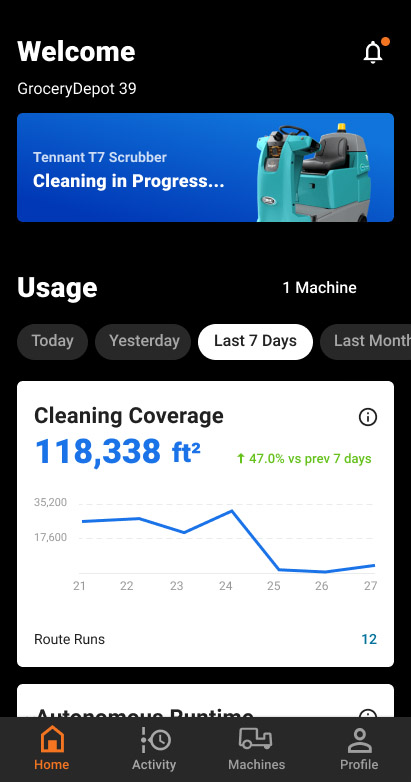

Who's responsible for monitoring and maintaining BrainOS-driven fleets of AMRs?

Cannon: It's either us or our partners. Brain Corp has multiple operations teams that provide top-level monitoring and staged rollouts. For specific accounts, there is targeted monitoring of day-to-day operations, so it's a scalable solution.

We're moving from reactive to proactive maintenance. We've started building in technology that makes predictions from the aggregate data we can access thanks to the scale of our fleet. It can detect patterns that show a machine is acting up, which our service partners can then address.

Software updates enable smarter robots

With the announcement of Brain's Gen 3 platform, what's the cadence of your upgrade cycles?

Cannon: Gen 2 is not at its end of life; it's not going away. Brain Corp will support another five years of software updates and maintenance. Gen 3 complements Gen 2 fleets.

Specific timelines depend on the product line. Products still in heavy development get updates more frequently, every two weeks, typically during pilots with customers.

More established fleets, such as floor scrubbers, get updates every quarter.

Are the enhancements more around individual human-robot interactions or fleets?

Cannon: On the human interaction side, it's amazing to see how commoditized capabilities are becoming across the larger ecosystem. A robot has to have people detection, not just recognition.

To train computer vision algorithms, we used to need a team that understood deep learning. Now you can just grab existing models, and some vendors lease capabilities in the hardware stack, so there is more performance out of the box.

We've optimized our software for the hardware we're using, and it's much easier to build intelligence into robots. There are dozens of capabilities now.

Developers can save time, money

How does Gen 3 enable easier product development? Can you give an example of how a customer is using or will use it differently than earlier versions?

Cannon: Internally, we’ve built a robot development kit taking learnings from Gen 2. We looked at bespoke applications and extracted out commonalities and core reusable capabilities.

It's a much more substantial architectural overhaul, and our redesigned model includes core building blocks. We developed them in a generic way for the robot development kit, then built specific products by vertical, such as scrubbing, vacuuming, or shelf scanning.

In Gen 3, developers can build a prototype in one to two days, down from two weeks. That time is a significant part of the investment in development.

Are the software and hardware modules that Brain Corp is developing with Flex oriented around specific applications or tasks? What industrial pain points will they address?

Cannon: Right now, we refer to Gen 3 machines as a modular hardware and software reference kit. It cuts down on the need for custom design, which is very capital-intensive.

The base machine can demonstrate mapping, navigation, and perception. You can add a scanning tower, cameras for computer-based scanners, RFID, or a virtual tour camera on top, at both the hardware and software layers. You just need to turn on some configuration flags for extrinsics and instantiate the correct drivers.

For new applications, part of Brain Corp's intent is for designers to be able to pivot without paying the cost of long iteration cycles.

Brain Corp stays the course

As the software stack for AMRs continues to evolve, how does Brain Corp intend to maintain its leadership?

Cannon: We lead in market penetration and data. While many competitors are starting to show up, we have the visibility to move quickly and leverage benchmarks.

For simulation, we're setting up thousands of benchmarks for navigation, mapping, and localization so BrainOS robots can move with confidence.

You've been CTO since December 2022; what are some of your goals in your new role?

Cannon: I'm keeping them to myself for now, but I want to keep our team focused. We know what we need to execute on this year, and there are lots of cool, shiny things [in the robotics space].
